\n", - "

\n", - "However, **there are ways** to modify the mutable contents of the tuple without raising the `TypeError`. The solution is the `.extend()` method or, alternatively, `.append()` (for lists):" - ] - }, - { - "cell_type": "code", - "collapsed": false, - "input": [ - "tup = ([],)\n", - "print('tup before: ', tup)\n", - "tup[0].extend([1])\n", - "print('tup after: ', tup)" - ], - "language": "python", - "metadata": {}, - "outputs": [ - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "tup before: ([],)\n", - "tup after: ([1],)\n" - ] - } - ], - "prompt_number": 44 - }, - { - "cell_type": "code", - "collapsed": false, - "input": [ - "tup = ([],)\n", - "print('tup before: ', tup)\n", - "tup[0].append(1)\n", - "print('tup after: ', tup)" - ], - "language": "python", - "metadata": {}, - "outputs": [ - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "tup before: ([],)\n", - "tup after: ([1],)\n" - ] - } - ], - "prompt_number": 5 - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "### Explanation\n", - "\n", - "**A. Jesse Jiryu Davis** has a nice explanation for this phenomenon (Original source: [http://emptysqua.re/blog/python-increment-is-weird-part-ii/](http://emptysqua.re/blog/python-increment-is-weird-part-ii/))\n", - "\n", - "If we try to extend the list via `+=` *\"then the statement executes `STORE_SUBSCR`, which calls the C function `PyObject_SetItem`, which checks if the object supports item assignment. In our case the object is a tuple, so `PyObject_SetItem` throws the `TypeError`. Mystery solved.\"*" - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "#### One more note about the `immutable` status of tuples. Tuples are famous for being immutable. So how come this code works?" 
- ] - }, - { - "cell_type": "code", - "collapsed": false, - "input": [ - "my_tup = (1,)\n", - "my_tup += (4,)\n", - "my_tup = my_tup + (5,)\n", - "print(my_tup)" - ], - "language": "python", - "metadata": {}, - "outputs": [ - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "(1, 4, 5)\n" - ] - } - ], - "prompt_number": 6 - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "What happens \"behind\" the curtains is that the tuple is not modified, but every time a new object is generated, which will inherit the old \"name tag\":" - ] - }, - { - "cell_type": "code", - "collapsed": false, - "input": [ - "my_tup = (1,)\n", - "print(id(my_tup))\n", - "my_tup += (4,)\n", - "print(id(my_tup))\n", - "my_tup = my_tup + (5,)\n", - "print(id(my_tup))" - ], - "language": "python", - "metadata": {}, - "outputs": [ - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "4337381840\n", - "4357415496\n", - "4357289952\n" - ] - } - ], - "prompt_number": 8 - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "

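To contrast this with lists, here is a minimal sketch (the variable names are made up for illustration): `+=` on a list extends the existing object in place, whereas `+=` on a tuple creates a new tuple and re-attaches the name to it.

```python
# += on a list vs. += on a tuple (illustrative names, not from the cells above)
my_list = [1]
list_id_before = id(my_list)
my_list += [4]            # the list is extended in place
list_same_object = id(my_list) == list_id_before

my_tup = (1,)
old_tup = my_tup          # keep a reference to the original tuple
my_tup += (4,)            # a new tuple is created and bound to the name
tup_same_object = my_tup is old_tup

print(list_same_object)   # True
print(tup_same_object)    # False
print(old_tup, my_tup)    # (1,) (1, 4)
```

The old tuple is untouched; only the "name tag" moved to the new object.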
\n", - "

\n", - "\n" - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "## List comprehensions are fast, but generators are faster!?" - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "[[back to top](#sections)]" - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "\"List comprehensions are fast, but generators are faster!?\" - No, not really (or significantly, see the benchmarks below). So what's the reason to prefer one over the other?\n", - "- use lists if you want to use the plethora of list methods \n", - "- use generators when you are dealing with huge collections to avoid memory issues" - ] - }, - { - "cell_type": "code", - "collapsed": false, - "input": [ - "import timeit\n", - "\n", - "def plainlist(n=100000):\n", - " my_list = []\n", - " for i in range(n):\n", - " if i % 5 == 0:\n", - " my_list.append(i)\n", - " return my_list\n", - "\n", - "def listcompr(n=100000):\n", - " my_list = [i for i in range(n) if i % 5 == 0]\n", - " return my_list\n", - "\n", - "def generator(n=100000):\n", - " my_gen = (i for i in range(n) if i % 5 == 0)\n", - " return my_gen\n", - "\n", - "def generator_yield(n=100000):\n", - " for i in range(n):\n", - " if i % 5 == 0:\n", - " yield i" - ], - "language": "python", - "metadata": {}, - "outputs": [], - "prompt_number": 11 - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "#### To be fair to the list, let us exhaust the generators:" - ] - }, - { - "cell_type": "code", - "collapsed": false, - "input": [ - "def test_plainlist(plain_list):\n", - " for i in plain_list():\n", - " pass\n", - "\n", - "def test_listcompr(listcompr):\n", - " for i in listcompr():\n", - " pass\n", - "\n", - "def test_generator(generator):\n", - " for i in generator():\n", - " pass\n", - "\n", - "def test_generator_yield(generator_yield):\n", - " for i in generator_yield():\n", - " pass\n", - "\n", - "print('plain_list: ', end = '')\n", - "%timeit test_plainlist(plainlist)\n", 
- "print('\\nlistcompr: ', end = '')\n", - "%timeit test_listcompr(listcompr)\n", - "print('\\ngenerator: ', end = '')\n", - "%timeit test_generator(generator)\n", - "print('\\ngenerator_yield: ', end = '')\n", - "%timeit test_generator_yield(generator_yield)" - ], - "language": "python", - "metadata": {}, - "outputs": [ - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "plain_list: 10 loops, best of 3: 22.4 ms per loop" - ] - }, - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "\n", - "\n", - "listcompr: 10 loops, best of 3: 20.8 ms per loop" - ] - }, - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "\n", - "\n", - "generator: 10 loops, best of 3: 22 ms per loop" - ] - }, - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "\n", - "\n", - "generator_yield: 10 loops, best of 3: 21.9 ms per loop" - ] - }, - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "\n" - ] - } - ], - "prompt_number": 13 - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "

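The memory argument can be illustrated with a rough sketch using `sys.getsizeof` (this is an addition, not part of the benchmark above; note that `getsizeof` only measures the container itself, not the integers it refers to):

```python
import sys

n = 100000
as_list = [i for i in range(n) if i % 5 == 0]
as_gen = (i for i in range(n) if i % 5 == 0)

# the list holds references to all 20000 results at once,
# the generator only stores its current state
print('list:     ', sys.getsizeof(as_list), 'bytes')
print('generator:', sys.getsizeof(as_gen), 'bytes')

# once consumed, the generator yields the same values as the list
assert list(as_gen) == as_list
```

The exact byte counts depend on the Python version and platform, so none are shown here.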
\n", - "

\n", - "" - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "## Public vs. private class methods and name mangling\n", - "\n" - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "[[back to top](#sections)]" - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "Who has not yet stumbled across the quote \"we are all consenting adults here\" in the Python community? Unlike in other languages like C++ (sorry, there are many more, but that's the one I am most familiar with), we can't really protect class methods from being used outside the class (i.e., by the API user). \n", - "All we can do is to mark methods as private to make clear that they are better not used outside the class, but it is really up to the class user, since \"we are all consenting adults here\"! \n", - "So, when we want to mark a class method as private, we can put a single underscore in front of it. \n", - "If we additionally want to avoid name clashes with other classes that might use the same method names, we can prefix the name with a double-underscore to invoke the name mangling.\n", - "\n", - "This doesn't prevent the class user from accessing this class member, though, but they have to know the trick and also know that it is at their own risk...\n", - "\n", - "Let the following example illustrate what I mean:" - ] - }, - { - "cell_type": "code", - "collapsed": false, - "input": [ - "class my_class():\n", - " def public_method(self):\n", - " print('Hello public world!')\n", - " def __private_method(self):\n", - " print('Hello private world!')\n", - " def call_private_method_in_class(self):\n", - " self.__private_method()\n", - " \n", - "my_instance = my_class()\n", - "\n", - "my_instance.public_method()\n", - "my_instance._my_class__private_method()\n", - "my_instance.call_private_method_in_class()" - ], - "language": "python", - "metadata": {}, - "outputs": [ - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "Hello public 
world!\n", - "Hello private world!\n", - "Hello private world!\n" - ] - } - ], - "prompt_number": 11 - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "

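To see what the name mangling actually does, a small sketch (reusing the class name from above, but with a method that returns its message so the result is easy to inspect) can list the attribute name that Python stores:

```python
class my_class():
    def __private_method(self):
        return 'Hello private world!'

my_instance = my_class()

# the method is stored under the mangled name _<ClassName>__<method>
mangled = [name for name in dir(my_instance) if name.endswith('__private_method')]
print(mangled)  # ['_my_class__private_method']

# outside the class body, the unmangled name does not exist
try:
    my_instance.__private_method()
except AttributeError as err:
    print('AttributeError:', err)
```

So the double underscore only renames the attribute; it does not hide it.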
\n", - "

\n", - "" - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "## The consequences of modifying a list when looping through it" - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "[[back to top](#sections)]" - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "It can be really dangerous to modify a list when iterating through it - this is a very common pitfall that can cause unintended behavior! \n", - "Look at the following examples, and for a fun exercise: try to figure out what is going on before you skip to the solution!" - ] - }, - { - "cell_type": "code", - "collapsed": false, - "input": [ - "a = [1, 2, 3, 4, 5]\n", - "for i in a:\n", - " if not i % 2:\n", - " a.remove(i)\n", - "print(a)" - ], - "language": "python", - "metadata": {}, - "outputs": [ - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "[1, 3, 5]\n" - ] - } - ], - "prompt_number": 3 - }, - { - "cell_type": "code", - "collapsed": false, - "input": [ - "b = [2, 4, 5, 6]\n", - "for i in b:\n", - " if not i % 2:\n", - " b.remove(i)\n", - "print(b)" - ], - "language": "python", - "metadata": {}, - "outputs": [ - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "[4, 5]\n" - ] - } - ], - "prompt_number": 4 - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "

\n", - "

\n", - "**The solution** is that we are iterating through the list index by index, and if we remove one of the items in-between, we inevitably mess around with the indexing, look at the following example, and it will become clear:" - ] - }, - { - "cell_type": "code", - "collapsed": false, - "input": [ - "b = [2, 4, 5, 6]\n", - "for index, item in enumerate(b):\n", - " print(index, item)\n", - " if not item % 2:\n", - " b.remove(item)\n", - "print(b)" - ], - "language": "python", - "metadata": {}, - "outputs": [ - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "0 2\n", - "1 5\n", - "2 6\n", - "[4, 5]\n" - ] - } - ], - "prompt_number": 7 - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "

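Two common ways around this pitfall, sketched here as a suggestion rather than as part of the original examples, are to build a new list or to loop over a copy while removing from the original:

```python
b = [2, 4, 5, 6]

# Option 1: build a new list that contains only the items we want to keep
odd_only = [item for item in b if item % 2]

# Option 2: iterate over a shallow copy (b[:]) and remove from the original
for item in b[:]:
    if not item % 2:
        b.remove(item)

print(odd_only)  # [5]
print(b)         # [5]
```

In both cases the indexes of the sequence we iterate over never shift underneath the loop.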
\n", - "

\n", - "" - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "## Dynamic binding and typos in variable names\n" - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "[[back to top](#sections)]" - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "Be careful, dynamic binding is convenient, but can also quickly become dangerous!" - ] - }, - { - "cell_type": "code", - "collapsed": false, - "input": [ - "print('first list:')\n", - "for i in range(3):\n", - " print(i)\n", - " \n", - "print('\\nsecond list:')\n", - "for j in range(3):\n", - " print(i) # I (intentionally) made typo here!" - ], - "language": "python", - "metadata": {}, - "outputs": [ - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "first list:\n", - "0\n", - "1\n", - "2\n", - "\n", - "second list:\n", - "2\n", - "2\n", - "2\n" - ] - } - ], - "prompt_number": 14 - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "

\n", - "

\n", - "" - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "\n", - "## List slicing using indexes that are \"out of range\"" - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "[[back to top](#sections)]" - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "We have all encountered it 1 (x10000) time(s) in our lives, the infamous `IndexError`:" - ] - }, - { - "cell_type": "code", - "collapsed": false, - "input": [ - "my_list = [1, 2, 3, 4, 5]\n", - "print(my_list[5])" - ], - "language": "python", - "metadata": {}, - "outputs": [ - { - "ename": "IndexError", - "evalue": "list index out of range", - "output_type": "pyerr", - "traceback": [ - "\u001b[0;31m---------------------------------------------------------------------------\u001b[0m\n\u001b[0;31mIndexError\u001b[0m Traceback (most recent call last)", - "\u001b[0;32m

\n", - "

\n", - "" - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "\n", - "## Reusing global variable names and `UnboundLocalErrors`" - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "[[back to top](#sections)]" - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "Usually, it is no problem to access global variables in the local scope of a function:" - ] - }, - { - "cell_type": "code", - "collapsed": false, - "input": [ - "def my_func():\n", - " print(var)\n", - "\n", - "var = 'global'\n", - "my_func()" - ], - "language": "python", - "metadata": {}, - "outputs": [ - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "global\n" - ] - } - ], - "prompt_number": 37 - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "And it is also no problem to use the same variable name in the local scope without affecting the global counterpart: " - ] - }, - { - "cell_type": "code", - "collapsed": false, - "input": [ - "def my_func():\n", - " var = 'locally changed'\n", - "\n", - "var = 'global'\n", - "my_func()\n", - "print(var)" - ], - "language": "python", - "metadata": {}, - "outputs": [ - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "global\n" - ] - } - ], - "prompt_number": 38 - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "But we have to be careful if we want to access a global variable inside a function and then reuse its name for a local variable in the same function:" - ] - }, - { - "cell_type": "code", - "collapsed": false, - "input": [ - "def my_func():\n", - " print(var) # want to access global variable\n", - " var = 'locally changed' # but Python thinks we forgot to define the local variable!\n", - " \n", - "var = 'global'\n", - "my_func()" - ], - "language": "python", - "metadata": {}, - "outputs": [ - { - "ename": "UnboundLocalError", - "evalue": "local variable 'var' referenced before assignment", - "output_type": 
"pyerr", - "traceback": [ - "\u001b[0;31m---------------------------------------------------------------------------\u001b[0m\n\u001b[0;31mUnboundLocalError\u001b[0m Traceback (most recent call last)", - "\u001b[0;32m

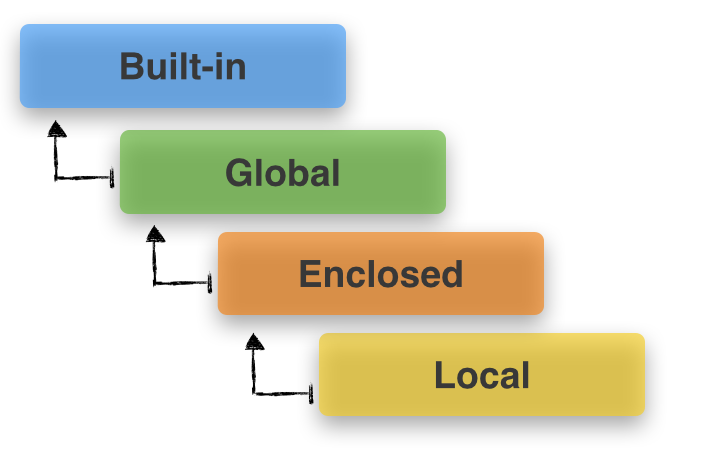

\n", - "

\n", - "" - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "## Creating copies of mutable objects\n", - "\n" - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "[[back to top](#sections)]" - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "Let's assume a scenario where we want to duplicate sub`list`s of values stored in another list. If we want to create independent sub`list` object, using the arithmetic multiplication operator could lead to rather unexpected (or undesired) results:" - ] - }, - { - "cell_type": "code", - "collapsed": false, - "input": [ - "my_list1 = [[1, 2, 3]] * 2\n", - "\n", - "print('initially ---> ', my_list1)\n", - "\n", - "# modify the 1st element of the 2nd sublist\n", - "my_list1[1][0] = 'a'\n", - "print(\"after my_list1[1][0] = 'a' ---> \", my_list1)" - ], - "language": "python", - "metadata": {}, - "outputs": [ - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "initially ---> [[1, 2, 3], [1, 2, 3]]\n", - "after my_list1[1][0] = 'a' ---> [['a', 2, 3], ['a', 2, 3]]\n" - ] - } - ], - "prompt_number": 24 - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "

\n", - "

\n", - "In this case, we should better create \"new\" objects:" - ] - }, - { - "cell_type": "code", - "collapsed": false, - "input": [ - "my_list2 = [[1, 2, 3] for i in range(2)]\n", - "\n", - "print('initially: ---> ', my_list2)\n", - "\n", - "# modify the 1st element of the 2nd sublist\n", - "my_list2[1][0] = 'a'\n", - "print(\"after my_list2[1][0] = 'a': ---> \", my_list2)" - ], - "language": "python", - "metadata": {}, - "outputs": [ - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "initially: ---> [[1, 2, 3], [1, 2, 3]]\n", - "after my_list2[1][0] = 'a': ---> [[1, 2, 3], ['a', 2, 3]]\n" - ] - } - ], - "prompt_number": 25 - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "

\n", - "And here is the proof:" - ] - }, - { - "cell_type": "code", - "collapsed": false, - "input": [ - "for a,b in zip(my_list1, my_list2):\n", - " print('id my_list1: {}, id my_list2: {}'.format(id(a), id(b)))" - ], - "language": "python", - "metadata": {}, - "outputs": [ - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "id my_list1: 4350764680, id my_list2: 4350766472\n", - "id my_list1: 4350764680, id my_list2: 4350766664\n" - ] - } - ], - "prompt_number": 26 - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "

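The same idea applies when we copy an existing nested list. As a small sketch (the `copy` module is not used in the cells above, so treat this as an aside), a shallow copy still shares the sub`list`s, while a deep copy does not:

```python
import copy

original = [[1, 2, 3], [4, 5, 6]]
shallow = copy.copy(original)      # new outer list, same inner lists
deep = copy.deepcopy(original)     # the inner lists are copied as well

original[0][0] = 'a'

print(shallow[0])  # ['a', 2, 3]
print(deep[0])     # [1, 2, 3]
```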
\n", - "

\n", - "" - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "\n", - "## Key differences between Python 2 and 3\n", - "\n" - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "[[back to top](#sections)]" - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "There are already some good articles that summarize the differences between Python 2 and 3, e.g., \n", - "- [https://wiki.python.org/moin/Python2orPython3](https://wiki.python.org/moin/Python2orPython3)\n", - "- [https://docs.python.org/3.0/whatsnew/3.0.html](https://docs.python.org/3.0/whatsnew/3.0.html)\n", - "- [http://python3porting.com/differences.html](http://python3porting.com/differences.html)\n", - "- [https://docs.python.org/3/howto/pyporting.html](https://docs.python.org/3/howto/pyporting.html) \n", - "etc.\n", - "\n", - "But it might still be worthwhile, especially for Python newcomers, to take a look at some of those!\n", - "(Note: the code was executed in Python 3.4.0 and Python 2.7.5 and copied from interactive shell sessions.)" - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "\n", - "### Unicode...\n", - "####- Python 2: \n", - "We have ASCII `str()` types, separate `unicode()`, but no separate `bytes` type\n", - "####- Python 3: \n", - "Now, we finally have Unicode (utf-8) `str`ings, and 2 byte classes: `bytes` and `bytearray`" - ] - }, - { - "cell_type": "code", - "collapsed": false, - "input": [ - "#############\n", - "# Python 2\n", - "#############\n", - "\n", - ">>> type(unicode('is like a python3 str()'))\n", - "

\n", - "

\n", - "" - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "\n", - "## Function annotations - What are those `->`'s in my Python code?\n" - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "[[back to top](#sections)]" - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "Have you ever seen any Python code that used colons inside the parentheses of a function definition?" - ] - }, - { - "cell_type": "code", - "collapsed": false, - "input": [ - "def foo1(x: 'insert x here', y: 'insert x^2 here'):\n", - " print('Hello, World')\n", - " return" - ], - "language": "python", - "metadata": {}, - "outputs": [], - "prompt_number": 8 - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "And what about the fancy arrow here?" - ] - }, - { - "cell_type": "code", - "collapsed": false, - "input": [ - "def foo2(x, y) -> 'Hi!':\n", - " print('Hello, World')\n", - " return" - ], - "language": "python", - "metadata": {}, - "outputs": [], - "prompt_number": 10 - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "Q: Is this valid Python syntax? \n", - "A: Yes!\n", - " \n", - " \n", - "Q: So, what happens if I *just call* the function? \n", - "A: Nothing!\n", - " \n", - "Here is the proof!" - ] - }, - { - "cell_type": "code", - "collapsed": false, - "input": [ - "foo1(1,2)" - ], - "language": "python", - "metadata": {}, - "outputs": [ - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "Hello, World\n" - ] - } - ], - "prompt_number": 9 - }, - { - "cell_type": "code", - "collapsed": false, - "input": [ - "foo2(1,2) " - ], - "language": "python", - "metadata": {}, - "outputs": [ - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "Hello, World\n" - ] - } - ], - "prompt_number": 11 - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "**So, those are function annotations ... 
** \n", - "- the colon for the function parameters \n", - "- the arrow for the return value \n", - "\n", - "You probably will never make use of them (or at least very rarely). Usually, we write good function documentation below the function as a docstring - or at least this is how I would do it (okay this case is a little bit extreme, I have to admit):" - ] - }, - { - "cell_type": "code", - "collapsed": false, - "input": [ - "def is_palindrome(a):\n", - " \"\"\"\n", - " Case- and punctuation-insensitive check if a string is a palindrome.\n", - " \n", - " Keyword arguments:\n", - " a (str): The string to be checked if it is a palindrome.\n", - " \n", - " Returns `True` if input string is a palindrome, else False.\n", - " \n", - " \"\"\"\n", - " stripped_str = [l for l in a.lower() if l.isalpha()]\n", - " return stripped_str == stripped_str[::-1]\n", - " " - ], - "language": "python", - "metadata": {}, - "outputs": [] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "However, function annotations can be useful to indicate that work is still in progress in some cases. But they are optional and I see them very very rarely.\n", - "\n", - "As it is stated in [PEP3107](http://legacy.python.org/dev/peps/pep-3107/#fundamentals-of-function-annotations):\n", - "\n", - "1. Function annotations, both for parameters and return values, are completely optional.\n", - "\n", - "2. Function annotations are nothing more than a way of associating arbitrary Python expressions with various parts of a function at compile-time.\n" - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "The nice thing about function annotations is their `__annotations__` attribute, which is a dictionary of all the parameters and/or the `return` value you annotated." 
- ] - }, - { - "cell_type": "code", - "collapsed": false, - "input": [ - "foo1.__annotations__" - ], - "language": "python", - "metadata": {}, - "outputs": [ - { - "metadata": {}, - "output_type": "pyout", - "prompt_number": 17, - "text": [ - "{'y': 'insert x^2 here', 'x': 'insert x here'}" - ] - } - ], - "prompt_number": 17 - }, - { - "cell_type": "code", - "collapsed": false, - "input": [ - "foo2.__annotations__" - ], - "language": "python", - "metadata": {}, - "outputs": [ - { - "metadata": {}, - "output_type": "pyout", - "prompt_number": 18, - "text": [ - "{'return': 'Hi!'}" - ] - } - ], - "prompt_number": 18 - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "**When are they useful?**" - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "Function annotations can be useful for a couple of things \n", - "- Documentation in general\n", - "- pre-condition testing\n", - "- [type checking](http://legacy.python.org/dev/peps/pep-0362/#annotation-checker)\n", - " \n", - "..." - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "

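As a rough sketch of the pre-condition/type checking idea (the helper `check_args` and the function `scale` are hypothetical names invented for this illustration, not an existing API), the `__annotations__` dictionary can be compared against the arguments before calling the function:

```python
def scale(x: float, factor: int) -> float:
    return x * factor

def check_args(func, *args):
    """Raise a TypeError if a positional argument does not match its annotation."""
    # hypothetical helper: only handles positional arguments annotated with a type
    names = func.__code__.co_varnames[:func.__code__.co_argcount]
    for name, value in zip(names, args):
        expected = func.__annotations__.get(name)
        if isinstance(expected, type) and not isinstance(value, expected):
            raise TypeError('{} should be {}'.format(name, expected.__name__))
    return func(*args)

print(check_args(scale, 2.0, 3))  # 6.0
```

Calling `check_args(scale, 'a', 3)` would raise a `TypeError` instead of silently returning `'aaa'`.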
\n", - "

\n", - "" - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "## Abortive statements in `finally` blocks" - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "Python's `try-except-finally` blocks are very handy for catching and handling errors. The `finally` block is always executed whether an `exception` has been raised or not as illustrated in the following example." - ] - }, - { - "cell_type": "code", - "collapsed": false, - "input": [ - "def try_finally1():\n", - " try:\n", - " print('in try:')\n", - " print('do some stuff')\n", - " float('abc')\n", - " except ValueError:\n", - " print('an error occurred')\n", - " else:\n", - " print('no error occurred')\n", - " finally:\n", - " print('always execute finally')\n", - " \n", - "try_finally1()" - ], - "language": "python", - "metadata": {}, - "outputs": [ - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "in try:\n", - "do some stuff\n", - "an error occurred\n", - "always execute finally\n" - ] - } - ], - "prompt_number": 24 - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "

\n", - "

\n", - "But can you also guess what will be printed in the next code cell?" - ] - }, - { - "cell_type": "code", - "collapsed": false, - "input": [ - "def try_finally2():\n", - " try:\n", - " print(\"do some stuff in try block\")\n", - " return \"return from try block\"\n", - " finally:\n", - " print(\"do some stuff in finally block\")\n", - " return \"always execute finally\"\n", - " \n", - "print(try_finally2())" - ], - "language": "python", - "metadata": {}, - "outputs": [ - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "do some stuff in try block\n", - "do some stuff in finally block\n", - "always execute finally\n" - ] - } - ], - "prompt_number": 21 - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "

\n", - "Here, the abortive `return` statement in the `finally` block simply overrules the `return` in the `try` block, since **`finally` is guaranteed to always be executed.** So, be careful using abortive statements in `finally` blocks!" - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "

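One consequence worth spelling out, shown here as a minimal sketch (the function name `try_finally3` is made up to continue the numbering above): a `return` in the `finally` block also discards an exception that is still pending from the `try` block.

```python
def try_finally3():
    try:
        float('abc')                       # raises a ValueError
    finally:
        return 'returned from finally'     # the pending ValueError is discarded

result = try_finally3()
print(result)  # returned from finally
```

The caller never sees the `ValueError`, which can make such bugs hard to track down.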
\n", - "

\n", - "" - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "#Assigning types to variables as values" - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "[[back to top](#sections)]" - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "I am not yet sure in which context this can be useful, but it is a nice fun fact to know that we can assign types as values to variables." - ] - }, - { - "cell_type": "code", - "collapsed": false, - "input": [ - "a_var = str\n", - "a_var(123)" - ], - "language": "python", - "metadata": {}, - "outputs": [ - { - "metadata": {}, - "output_type": "pyout", - "prompt_number": 1, - "text": [ - "'123'" - ] - } - ], - "prompt_number": 1 - }, - { - "cell_type": "code", - "collapsed": false, - "input": [ - "from random import choice\n", - "\n", - "a, b, c = float, int, str\n", - "for i in range(5):\n", - " j = choice([a,b,c])(i)\n", - " print(j, type(j))" - ], - "language": "python", - "metadata": {}, - "outputs": [ - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "0

\n", - "

" - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "##Keyword argument unpacking syntax - `*args` and `**kwargs`" - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "Python has a very convenient \"keyword argument unpacking syntax\" (often also referred to as \"splat\"-operators). This is particularly useful, if we want to define a function that can take a arbitrary number of input arguments." - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "#### Single-asterisk (*args)" - ] - }, - { - "cell_type": "code", - "collapsed": false, - "input": [ - "def a_func(*args):\n", - " print('type of args:', type(args))\n", - " print('args contents:', args)\n", - " print('1st argument:', args[0])\n", - "\n", - "a_func(0, 1, 'a', 'b', 'c')" - ], - "language": "python", - "metadata": {}, - "outputs": [ - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "type of args:

\n", - "

\n", - "

\n", - "

\n" - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "# Changelog" - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "[[back to top](#sections)]" - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "#### 05/01/2014\n", - "- new section: keyword argument unpacking syntax\n", - "\n", - "#### 04/27/2014\n", - "- minor fixes of typos\n", - "- single- vs. double-underscore clarification in the private class section." - ] - }, - { - "cell_type": "code", - "collapsed": false, - "input": [], - "language": "python", - "metadata": {}, - "outputs": [], - "prompt_number": 1 - }, - { - "cell_type": "code", - "collapsed": false, - "input": [], - "language": "python", - "metadata": {}, - "outputs": [] - } - ], - "metadata": {} - } - ] -} \ No newline at end of file diff --git a/.ipynb_checkpoints/palindrome_timeit-checkpoint.ipynb b/.ipynb_checkpoints/palindrome_timeit-checkpoint.ipynb deleted file mode 100644 index ef0e33f..0000000 --- a/.ipynb_checkpoints/palindrome_timeit-checkpoint.ipynb +++ /dev/null @@ -1,194 +0,0 @@ -{ - "metadata": { - "name": "", - "signature": "sha256:6ea19109869c82ee989c8ea0599ec49401e74246a542ad0b7b05f6ef464bda19" - }, - "nbformat": 3, - "nbformat_minor": 0, - "worksheets": [ - { - "cells": [ - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "Sebastian Raschka 04/2014\n", - "\n", - "#Timing different Implementations of palindrome functions" - ] - }, - { - "cell_type": "code", - "collapsed": false, - "input": [ - "import re\n", - "import timeit\n", - "import string\n", - "\n", - "# All functions return True if an input string is a palindrome. 
Else returns False.\n", - "\n", - "\n", - "\n", - "####\n", - "#### case-insensitive ignoring punctuation characters\n", - "####\n", - "\n", - "def palindrome_short(my_str):\n", - " stripped_str = \"\".join(l.lower() for l in my_str if l.isalpha())\n", - " return stripped_str == stripped_str[::-1]\n", - "\n", - "def palindrome_regex(my_str):\n", - " return re.sub('\\W', '', my_str.lower()) == re.sub('\\W', '', my_str[::-1].lower())\n", - "\n", - "def palindrome_stringlib(my_str):\n", - " LOWERS = set(string.ascii_lowercase)\n", - " letters = [c for c in my_str.lower() if c in LOWERS]\n", - " return letters == letters[::-1]\n", - "\n", - "LOWERS = set(string.ascii_lowercase)\n", - "def palindrome_stringlib2(my_str):\n", - " letters = [c for c in my_str.lower() if c in LOWERS]\n", - " return letters == letters[::-1]\n", - "\n", - "def palindrome_isalpha(my_str):\n", - " stripped_str = [l for l in my_str.lower() if l.isalpha()]\n", - " return stripped_str == stripped_str[::-1]\n", - "\n", - "\n", - "\n", - "####\n", - "#### functions considering all characters (case-sensitive)\n", - "####\n", - "\n", - "def palindrome_reverse1(my_str):\n", - " return my_str == my_str[::-1]\n", - "\n", - "def palindrome_reverse2(my_str):\n", - " return my_str == ''.join(reversed(my_str))\n", - "\n", - "def palindrome_recurs(my_str):\n", - " if len(my_str) < 2:\n", - " return True\n", - " if my_str[0] != my_str[-1]:\n", - " return False\n", - " return palindrome_recurs(my_str[1:-1])\n", - "\n", - "\n", - "\n" - ], - "language": "python", - "metadata": {}, - "outputs": [], - "prompt_number": 10 - }, - { - "cell_type": "code", - "collapsed": false, - "input": [ - "test_str = \"Go hang a salami. 
I'm a lasagna hog.\"\n", - "\n", - "print('case-insensitive functions ignoring punctuation characters')\n", - "%timeit palindrome_short(test_str)\n", - "%timeit palindrome_regex(test_str)\n", - "%timeit palindrome_stringlib(test_str)\n", - "%timeit palindrome_stringlib2(test_str)\n", - "%timeit palindrome_isalpha(test_str)\n", - "\n", - "print('\\n\\nfunctions considering all characters (case-sensitive)')\n", - "%timeit palindrome_reverse1(test_str)\n", - "%timeit palindrome_reverse2(test_str)\n", - "%timeit palindrome_recurs(test_str)\n" - ], - "language": "python", - "metadata": {}, - "outputs": [ - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "case-insensitive functions ignoring punctuation characters\n", - "100000 loops, best of 3: 15.3 \u00b5s per loop" - ] - }, - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "\n", - "100000 loops, best of 3: 19.9 \u00b5s per loop" - ] - }, - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "\n", - "100000 loops, best of 3: 13.5 \u00b5s per loop" - ] - }, - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "\n", - "100000 loops, best of 3: 8.58 \u00b5s per loop" - ] - }, - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "\n", - "100000 loops, best of 3: 9.42 \u00b5s per loop" - ] - }, - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "\n", - "\n", - "\n", - "functions considering all characters (case-sensitive)\n", - "1000000 loops, best of 3: 508 ns per loop" - ] - }, - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "\n", - "100000 loops, best of 3: 3.08 \u00b5s per loop" - ] - }, - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "\n", - "1000000 loops, best of 3: 480 ns per loop" - ] - }, - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "\n" - ] - } - ], - "prompt_number": 11 - }, - { - "cell_type": "code", - "collapsed": false, - "input": [], - "language": "python", - 
"metadata": {}, - "outputs": [] - } - ], - "metadata": {} - } - ] -} \ No newline at end of file diff --git a/.ipynb_checkpoints/python_true_false-checkpoint.ipynb b/.ipynb_checkpoints/python_true_false-checkpoint.ipynb deleted file mode 100644 index b2786a2..0000000 --- a/.ipynb_checkpoints/python_true_false-checkpoint.ipynb +++ /dev/null @@ -1,578 +0,0 @@ -{ - "metadata": { - "name": "" - }, - "nbformat": 3, - "nbformat_minor": 0, - "worksheets": [ - { - "cells": [ - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "Sebastian Raschka, 03/2014 \n", - "Code was executed in Python 3.4.0" - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "###`True` and `False` in the `datetime` module\n", - "\n", - "Pointed out in a nice article **\"A false midnight\"** at [http://lwn.net/SubscriberLink/590299/bf73fe823974acea/](http://lwn.net/SubscriberLink/590299/bf73fe823974acea/):\n", - "\n", - "*\"it often comes as a big surprise for programmers to find (sometimes by way of a hard-to-reproduce bug) that, \n", - "unlike any other time value, midnight (i.e. datetime.time(0,0,0)) is False. 
\n", - "A long discussion on the python-ideas mailing list shows that, while surprising, \n", - "that behavior is desirable\u2014at least in some quarters.\"*" - ] - }, - { - "cell_type": "code", - "collapsed": false, - "input": [ - "import datetime\n", - "\n", - "print('\"datetime.time(0,0,0)\" (Midnight) evaluates to', bool(datetime.time(0,0,0)))\n", - "\n", - "print('\"datetime.time(1,0,0)\" (1 am) evaluates to', bool(datetime.time(1,0,0)))" - ], - "language": "python", - "metadata": {}, - "outputs": [ - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "\"datetime.time(0,0,0)\" (Midnight) evaluates to False\n", - "\"datetime.time(1,0,0)\" (1 am) evaluates to True\n" - ] - } - ], - "prompt_number": 17 - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "## Boolean `True`" - ] - }, - { - "cell_type": "code", - "collapsed": false, - "input": [ - "my_true_val = True\n", - "\n", - "\n", - "print('my_true_val == True:', my_true_val == True)\n", - "print('my_true_val is True:', my_true_val is True)\n", - "\n", - "print('my_true_val == None:', my_true_val == None)\n", - "print('my_true_val is None:', my_true_val is None)\n", - "\n", - "print('my_true_val == False:', my_true_val == False)\n", - "print('my_true_val is False:', my_true_val is False)\n", - "\n", - "\n", - "if my_true_val:\n", - " print('\"if my_true_val:\" is True')\n", - "else:\n", - " print('\"if my_true_val:\" is False')\n", - " \n", - "if not my_true_val:\n", - " print('\"if not my_true_val:\" is True')\n", - "else:\n", - " print('\"if not my_true_val:\" is False')" - ], - "language": "python", - "metadata": {}, - "outputs": [ - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "my_true_val == True: True\n", - "my_true_val is True: True\n", - "my_true_val == None: False\n", - "my_true_val is None: False\n", - "my_true_val == False: False\n", - "my_true_val is False: False\n", - "\"if my_true_val:\" is True\n", - "\"if not my_true_val:\" 
is False\n" - ] - } - ], - "prompt_number": 83 - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "## Boolean `False`" - ] - }, - { - "cell_type": "code", - "collapsed": false, - "input": [ - "my_false_val = False\n", - "\n", - "\n", - "print('my_false_val == True:', my_false_val == True)\n", - "print('my_false_val is True:', my_false_val is True)\n", - "\n", - "print('my_false_val == None:', my_false_val == None)\n", - "print('my_false_val is None:', my_false_val is None)\n", - "\n", - "print('my_false_val == False:', my_false_val == False)\n", - "print('my_false_val is False:', my_false_val is False)\n", - "\n", - "\n", - "if my_false_val:\n", - " print('\"if my_false_val:\" is True')\n", - "else:\n", - " print('\"if my_false_val:\" is False')\n", - " \n", - "if not my_false_val:\n", - " print('\"if not my_false_val:\" is True')\n", - "else:\n", - " print('\"if not my_false_val:\" is False')" - ], - "language": "python", - "metadata": {}, - "outputs": [ - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "my_false_val == True: False\n", - "my_false_val is True: False\n", - "my_false_val == None: False\n", - "my_false_val is None: False\n", - "my_false_val == False: True\n", - "my_false_val is False: True\n", - "\"if my_false_val:\" is False\n", - "\"if not my_false_val:\" is True\n" - ] - } - ], - "prompt_number": 76 - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "## `None` 'value'" - ] - }, - { - "cell_type": "code", - "collapsed": false, - "input": [ - "my_none_var = None\n", - "\n", - "print('my_none_var == True:', my_none_var == True)\n", - "print('my_none_var is True:', my_none_var is True)\n", - "\n", - "print('my_none_var == None:', my_none_var == None)\n", - "print('my_none_var is None:', my_none_var is None)\n", - "\n", - "print('my_none_var == False:', my_none_var == False)\n", - "print('my_none_var is False:', my_none_var is False)\n", - "\n", - "\n", - "if my_none_var:\n", - " print('\"if 
my_none_var:\" is True')\n", - "else:\n", - " print('\"if my_none_var:\" is False')\n", - "\n", - "if not my_none_var:\n", - " print('\"if not my_none_var:\" is True')\n", - "else:\n", - " print('\"if not my_none_var:\" is False')" - ], - "language": "python", - "metadata": {}, - "outputs": [ - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "my_none_var == True: False\n", - "my_none_var is True: False\n", - "my_none_var == None: True\n", - "my_none_var is None: True\n", - "my_none_var == False: False\n", - "my_none_var is False: False\n", - "\"if my_none_var:\" is False\n", - "\"if not my_none_var:\" is True\n" - ] - } - ], - "prompt_number": 62 - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "## Empty String" - ] - }, - { - "cell_type": "code", - "collapsed": false, - "input": [ - "my_empty_string = \"\"\n", - "\n", - "print('my_empty_string == True:', my_empty_string == True)\n", - "print('my_empty_string is True:', my_empty_string is True)\n", - "\n", - "print('my_empty_string == None:', my_empty_string == None)\n", - "print('my_empty_string is None:', my_empty_string is None)\n", - "\n", - "print('my_empty_string == False:', my_empty_string == False)\n", - "print('my_empty_string is False:', my_empty_string is False)\n", - "\n", - "\n", - "if my_empty_string:\n", - " print('\"if my_empty_string:\" is True')\n", - "else:\n", - " print('\"if my_empty_string:\" is False')\n", - " \n", - "if not my_empty_string:\n", - " print('\"if not my_empty_string:\" is True')\n", - "else:\n", - " print('\"if not my_empty_string:\" is False')" - ], - "language": "python", - "metadata": {}, - "outputs": [ - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "my_empty_string == True: False\n", - "my_empty_string is True: False\n", - "my_empty_string == None: False\n", - "my_empty_string is None: False\n", - "my_empty_string == False: False\n", - "my_empty_string is False: False\n", - "\"if my_empty_string:\" is False\n", - 
"\"if not my_empty_string:\" is True\n" - ] - } - ], - "prompt_number": 61 - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "## Empty List\n", - "It is generally not a good idea to use `==` to check for empty lists..." - ] - }, - { - "cell_type": "code", - "collapsed": false, - "input": [ - "my_empty_list = []\n", - "\n", - "\n", - "print('my_empty_list == True:', my_empty_list == True)\n", - "print('my_empty_list is True:', my_empty_list is True)\n", - "\n", - "print('my_empty_list == None:', my_empty_list == None)\n", - "print('my_empty_list is None:', my_empty_list is None)\n", - "\n", - "print('my_empty_list == False:', my_empty_list == False)\n", - "print('my_empty_list is False:', my_empty_list is False)\n", - "\n", - "\n", - "if my_empty_list:\n", - " print('\"if my_empty_list:\" is True')\n", - "else:\n", - " print('\"if my_empty_list:\" is False')\n", - " \n", - "if not my_empty_list:\n", - " print('\"if not my_empty_list:\" is True')\n", - "else:\n", - " print('\"if not my_empty_list:\" is False')\n", - "\n", - "\n", - " \n" - ], - "language": "python", - "metadata": {}, - "outputs": [ - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "my_empty_list == True: False\n", - "my_empty_list is True: False\n", - "my_empty_list == None: False\n", - "my_empty_list is None: False\n", - "my_empty_list == False: False\n", - "my_empty_list is False: False\n", - "\"if my_empty_list:\" is False\n", - "\"if not my_empty_list:\" is True\n" - ] - } - ], - "prompt_number": 67 - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "## [0]-List" - ] - }, - { - "cell_type": "code", - "collapsed": false, - "input": [ - "my_zero_list = [0]\n", - "\n", - "\n", - "print('my_zero_list == True:', my_zero_list == True)\n", - "print('my_zero_list is True:', my_zero_list is True)\n", - "\n", - "print('my_zero_list == None:', my_zero_list == None)\n", - "print('my_zero_list is None:', my_zero_list is None)\n", - "\n", - 
"print('my_zero_list == False:', my_zero_list == False)\n", - "print('my_zero_list is False:', my_zero_list is False)\n", - "\n", - "\n", - "if my_zero_list:\n", - " print('\"if my_zero_list:\" is True')\n", - "else:\n", - " print('\"if my_zero_list:\" is False')\n", - " \n", - "if not my_zero_list:\n", - " print('\"if not my_zero_list:\" is True')\n", - "else:\n", - " print('\"if not my_zero_list:\" is False')" - ], - "language": "python", - "metadata": {}, - "outputs": [ - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "my_zero_list == True: False\n", - "my_zero_list is True: False\n", - "my_zero_list == None: False\n", - "my_zero_list is None: False\n", - "my_zero_list == False: False\n", - "my_zero_list is False: False\n", - "\"if my_zero_list:\" is True\n", - "\"if not my_zero_list:\" is False\n" - ] - } - ], - "prompt_number": 70 - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "## List comparison \n", - "List comparisons are a handy way to show the difference between `==` and `is`. \n", - "While `==` compares the values of two objects for equality, `is` checks whether two names refer to the very same object (identity).\n", - "The examples below show that we can assign a pointer to the same list object by using `=`, e.g., `list1 = list2`. \n", - "a) If we want to make a **shallow** copy of the list values, we have to make a little tweak: `list1 = list2[:]`, or \n", - "b) a **deepcopy** via `list1 = copy.deepcopy(list2)`\n", - "\n", - "Possibly the best explanation of shallow vs. deep copies I've read so far:\n", - "\n", - "*** \"Shallow copies duplicate as little as possible. A shallow copy of a collection is a copy of the collection structure, not the elements. With a shallow copy, two collections now share the individual elements.\n", - "Deep copies duplicate everything. 
A deep copy of a collection is two collections with all of the elements in the original collection duplicated.\"***\n", - "\n", - "(via [S.Lott](http://stackoverflow.com/users/10661/s-lott) on [StackOverflow](http://stackoverflow.com/questions/184710/what-is-the-difference-between-a-deep-copy-and-a-shallow-copy))" - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "###a) Shallow vs. deep copies for simple elements \n", - "List modification of the original list doesn't affect \n", - "shallow copies or deep copies if the list contains literals." - ] - }, - { - "cell_type": "code", - "collapsed": false, - "input": [ - "from copy import deepcopy\n", - "\n", - "my_first_list = [1]\n", - "my_second_list = [1]\n", - "print('my_first_list == my_second_list:', my_first_list == my_second_list)\n", - "print('my_first_list is my_second_list:', my_first_list is my_second_list)\n", - "\n", - "my_third_list = my_first_list\n", - "print('my_first_list == my_third_list:', my_first_list == my_third_list)\n", - "print('my_first_list is my_third_list:', my_first_list is my_third_list)\n", - "\n", - "my_shallow_copy = my_first_list[:]\n", - "print('my_first_list == my_shallow_copy:', my_first_list == my_shallow_copy)\n", - "print('my_first_list is my_shallow_copy:', my_first_list is my_shallow_copy)\n", - "\n", - "my_deep_copy = deepcopy(my_first_list)\n", - "print('my_first_list == my_deep_copy:', my_first_list == my_deep_copy)\n", - "print('my_first_list is my_deep_copy:', my_first_list is my_deep_copy)\n", - "\n", - "print('\\nmy_third_list:', my_third_list)\n", - "print('my_shallow_copy:', my_shallow_copy)\n", - "print('my_deep_copy:', my_deep_copy)\n", - "\n", - "my_first_list[0] = 2\n", - "print('after setting \"my_first_list[0] = 2\"')\n", - "print('my_third_list:', my_third_list)\n", - "print('my_shallow_copy:', my_shallow_copy)\n", - "print('my_deep_copy:', my_deep_copy)" - ], - "language": "python", - "metadata": {}, - "outputs": [ - { - "output_type": 
"stream", - "stream": "stdout", - "text": [ - "my_first_list == my_second_list: True\n", - "my_first_list is my_second_list: False\n", - "my_first_list == my_third_list: True\n", - "my_first_list is my_third_list: True\n", - "my_first_list == my_shallow_copy: True\n", - "my_first_list is my_shallow_copy: False\n", - "my_first_list == my_deep_copy: True\n", - "my_first_list is my_deep_copy: False\n", - "\n", - "my_third_list: [1]\n", - "my_shallow_copy: [1]\n", - "my_deep_copy: [1]\n", - "after setting \"my_first_list[0] = 2\"\n", - "my_third_list: [2]\n", - "my_shallow_copy: [1]\n", - "my_deep_copy: [1]\n" - ] - } - ], - "prompt_number": 11 - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "### b) Shallow vs. deep copies if list contains other structures and objects\n", - "List modification of the original list does affect \n", - "shallow copies, but not deep copies if the list contains compound objects." - ] - }, - { - "cell_type": "code", - "collapsed": false, - "input": [ - "my_first_list = [[1],[2]]\n", - "my_second_list = [[1],[2]]\n", - "print('my_first_list == my_second_list:', my_first_list == my_second_list)\n", - "print('my_first_list is my_second_list:', my_first_list is my_second_list)\n", - "\n", - "my_third_list = my_first_list\n", - "print('my_first_list == my_third_list:', my_first_list == my_third_list)\n", - "print('my_first_list is my_third_list:', my_first_list is my_third_list)\n", - "\n", - "my_shallow_copy = my_first_list[:]\n", - "print('my_first_list == my_shallow_copy:', my_first_list == my_shallow_copy)\n", - "print('my_first_list is my_shallow_copy:', my_first_list is my_shallow_copy)\n", - "\n", - "my_deep_copy = deepcopy(my_first_list)\n", - "print('my_first_list == my_deep_copy:', my_first_list == my_deep_copy)\n", - "print('my_first_list is my_deep_copy:', my_first_list is my_deep_copy)\n", - "\n", - "print('\\nmy_third_list:', my_third_list)\n", - "print('my_shallow_copy:', my_shallow_copy)\n", - 
"print('my_deep_copy:', my_deep_copy)\n", - "\n", - "my_first_list[0][0] = 2\n", - "print('after setting \"my_first_list[0][0] = 2\"')\n", - "print('my_third_list:', my_third_list)\n", - "print('my_shallow_copy:', my_shallow_copy)\n", - "print('my_deep_copy:', my_deep_copy)" - ], - "language": "python", - "metadata": {}, - "outputs": [ - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "my_first_list == my_second_list: True\n", - "my_first_list is my_second_list: False\n", - "my_first_list == my_third_list: True\n", - "my_first_list is my_third_list: True\n", - "my_first_list == my_shallow_copy: True\n", - "my_first_list is my_shallow_copy: False\n", - "my_first_list == my_deep_copy: True\n", - "my_first_list is my_deep_copy: False\n", - "\n", - "my_third_list: [[1], [2]]\n", - "my_shallow_copy: [[1], [2]]\n", - "my_deep_copy: [[1], [2]]\n", - "after setting \"my_first_list[0][0] = 2\"\n", - "my_third_list: [[2], [2]]\n", - "my_shallow_copy: [[2], [2]]\n", - "my_deep_copy: [[1], [2]]\n" - ] - } - ], - "prompt_number": 13 - }, - { - "cell_type": "heading", - "level": 2, - "metadata": {}, - "source": [ - "Some Python oddity:" - ] - }, - { - "cell_type": "code", - "collapsed": false, - "input": [ - "a = 1\n", - "b = 1\n", - "print('a is b', bool(a is b))\n", - "True\n", - "\n", - "a = 999\n", - "b = 999\n", - "print('a is b', bool(a is b))\n" - ], - "language": "python", - "metadata": {}, - "outputs": [ - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "a is b True\n", - "a is b False\n" - ] - } - ], - "prompt_number": 1 - } - ], - "metadata": {} - } - ] -} \ No newline at end of file diff --git a/.ipynb_checkpoints/timeit_test-checkpoint.ipynb b/.ipynb_checkpoints/timeit_test-checkpoint.ipynb deleted file mode 100644 index dd46362..0000000 --- a/.ipynb_checkpoints/timeit_test-checkpoint.ipynb +++ /dev/null @@ -1,628 +0,0 @@ -{ - "metadata": { - "name": "", - "signature": 
"sha256:5a2264b30b9632e14bd425a887a4455658fbdf9f8102fc5703ad982c3fa09b21" - }, - "nbformat": 3, - "nbformat_minor": 0, - "worksheets": [ - { - "cells": [ - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "Sebastian Raschka \n", - "last updated: 04/14/2014 \n", - "\n", - "[Link to this IPython Notebook on GitHub](https://github.com/rasbt/python_reference/blob/master/timeit_test.ipynb)" - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "\n", - "\n", - "# Python benchmarks via `timeit`" - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "# Sections\n", - "- [String operations](#string_operations)\n", - " - [String formatting: .format() vs. binary operator %s](#str_format_bin)\n", - " - [String reversing: [::-1] vs. `''.join(reversed())`](#str_reverse)\n", - " - [String concatenation: `+=` vs. `''.join()`](#string_concat)\n", - " - [Assembling strings](#string_assembly) \n", - "- [List operations](#list_operations)\n", - " - [List reversing: [::-1] vs. reverse() vs. reversed()](#list_reverse)\n", - " - [Creating lists using conditional statements](#create_cond_list)\n", - "- [Dictionary operations](#dict_ops) \n", - " - [Adding elements to a dictionary](#adding_dict_elements)" - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "\n", - "\n", - "# String operations" - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "\n", - "## String formatting: `.format()` vs. binary operator `%s`\n", - "\n", - "We expect the string .format() method to perform slower than %, because it is doing the formatting for each object itself, where formatting via the binary % is hard-coded for known types. But let's see how big the difference really is..." 
- ] - }, - { - "cell_type": "code", - "collapsed": false, - "input": [ - "import timeit\n", - "\n", - "def test_format():\n", - " return ['{}'.format(i) for i in range(1000000)]\n", - "\n", - "def test_binaryop():\n", - " return ['%s' %i for i in range(1000000)]\n", - "\n", - "%timeit test_format()\n", - "%timeit test_binaryop()\n", - "\n", - "#\n", - "# Python 3.4.0\n", - "# MacOS X 10.9.2\n", - "# 2.5 GHz Intel Core i5\n", - "# 4 GB 1600 Mhz DDR3\n", - "#" - ], - "language": "python", - "metadata": {}, - "outputs": [ - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "1 loops, best of 3: 400 ms per loop\n", - "1 loops, best of 3: 241 ms per loop" - ] - }, - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "\n" - ] - } - ], - "prompt_number": 3 - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "\n", - "## String reversing: `[::-1]` vs. `''.join(reversed())`" - ] - }, - { - "cell_type": "code", - "collapsed": false, - "input": [ - "import timeit\n", - "\n", - "def reverse_join(my_str):\n", - " return ''.join(reversed(my_str))\n", - " \n", - "def reverse_slizing(my_str):\n", - " return my_str[::-1]\n", - "\n", - "\n", - "# Test to show that both work\n", - "a = reverse_join('abcd')\n", - "b = reverse_slizing('abcd')\n", - "assert(a == b and a == 'dcba')\n", - "\n", - "%timeit reverse_join('abcd')\n", - "%timeit reverse_slizing('abcd')\n", - "\n", - "# Python 3.4.0\n", - "# MacOS X 10.9.2\n", - "# 2.4 GHz Intel Core Duo\n", - "# 8 GB 1067 Mhz DDR3\n", - "#" - ], - "language": "python", - "metadata": {}, - "outputs": [ - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "1000000 loops, best of 3: 1.28 \u00b5s per loop\n", - "1000000 loops, best of 3: 337 ns per loop" - ] - }, - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "\n" - ] - } - ], - "prompt_number": 13 - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "\n", - "## String concatenation: `+=` vs. 
`''.join()`" - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "Strings in Python are immutable objects. So, each time we append a character to a string, it has to be created \u201cfrom scratch\u201d in memory. Thus, the answer to the question \u201cWhat is the most efficient way to concatenate strings?\u201d is quite obvious, but the relative numbers of performance gains are nonetheless interesting." - ] - }, - { - "cell_type": "code", - "collapsed": false, - "input": [ - "import timeit\n", - "\n", - "def string_add(in_chars):\n", - " new_str = ''\n", - " for char in in_chars:\n", - " new_str += char\n", - " return new_str\n", - "\n", - "def string_join(in_chars):\n", - " return ''.join(in_chars)\n", - "\n", - "test_chars = ['a', 'b', 'c', 'd', 'e', 'f']\n", - "\n", - "%timeit string_add(test_chars)\n", - "%timeit string_join(test_chars)\n", - "\n", - "#\n", - "# Python 3.4.0\n", - "# MacOS X 10.9.2\n", - "# 2.5 GHz Intel Core i5\n", - "# 4 GB 1600 Mhz DDR3\n", - "#" - ], - "language": "python", - "metadata": {}, - "outputs": [ - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "1000000 loops, best of 3: 595 ns per loop\n", - "1000000 loops, best of 3: 269 ns per loop" - ] - }, - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "\n" - ] - } - ], - "prompt_number": 16 - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "\n", - "## Assembling strings\n", - "\n", - "Next, I wanted to compare different methods of string \u201cassembly.\u201d This is different from simple string concatenation, which we have seen in the previous section, since we insert values into a string, e.g., from a variable." 
- ] - }, - { - "cell_type": "code", - "collapsed": false, - "input": [ - "import timeit\n", - "\n", - "def plus_operator():\n", - " return 'a' + str(1) + str(2) \n", - " \n", - "def format_method():\n", - " return 'a{}{}'.format(1,2)\n", - " \n", - "def binary_operator():\n", - " return 'a%s%s' %(1,2)\n", - "\n", - "%timeit plus_operator()\n", - "%timeit format_method()\n", - "%timeit binary_operator()\n", - "\n", - "#\n", - "# Python 3.4.0\n", - "# MacOS X 10.9.2\n", - "# 2.5 GHz Intel Core i5\n", - "# 4 GB 1600 Mhz DDR3\n", - "#" - ], - "language": "python", - "metadata": {}, - "outputs": [ - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "1000000 loops, best of 3: 764 ns per loop\n", - "1000000 loops, best of 3: 494 ns per loop" - ] - }, - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "\n", - "10000000 loops, best of 3: 79.3 ns per loop" - ] - }, - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "\n" - ] - } - ], - "prompt_number": 17 - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "\n", - "# List operations" - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "\n", - "## List reversing - `[::-1]` vs. `reverse()` vs. 
`reversed()`" - ] - }, - { - "cell_type": "code", - "collapsed": false, - "input": [ - "import timeit\n", - "\n", - "def reverse_func(my_list):\n", - " new_list = my_list[:]\n", - " new_list.reverse()\n", - " return new_list\n", - " \n", - "def reversed_func(my_list):\n", - " return list(reversed(my_list))\n", - "\n", - "def reverse_slizing(my_list):\n", - " return my_list[::-1]\n", - "\n", - "%timeit reverse_func([1,2,3,4,5])\n", - "%timeit reversed_func([1,2,3,4,5])\n", - "%timeit reverse_slizing([1,2,3,4,5])\n", - "\n", - "# Python 3.4.0\n", - "# MacOS X 10.9.2\n", - "# 2.4 GHz Intel Core Duo\n", - "# 8 GB 1067 Mhz DDR3\n", - "#" - ], - "language": "python", - "metadata": {}, - "outputs": [ - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "1000000 loops, best of 3: 930 ns per loop\n", - "1000000 loops, best of 3: 1.89 \u00b5s per loop" - ] - }, - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "\n", - "1000000 loops, best of 3: 775 ns per loop" - ] - }, - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "\n" - ] - } - ], - "prompt_number": 1 - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "\n", - "## Creating lists using conditional statements\n", - "\n", - "In this test, I attempted to figure out the fastest way to create a new list of elements that meet a certain criterion. For the sake of simplicity, the criterion was to check if an element is even or odd, and only if the element was even, it should be included in the list. 
For example, the resulting list for numbers in the range from 1 to 10 would be \n", - "[2, 4, 6, 8, 10].\n", - "\n", - "Here, I tested three different approaches: \n", - "1) a simple for loop with an if-statement check (`cond_loop()`) \n", - "2) a list comprehension (`list_compr()`) \n", - "3) the built-in filter() function (`filter_func()`) \n", - "\n", - "Note that the filter() function returns an iterator in Python 3 (not a list, as in Python 2), so I had to wrap it in an additional list() function call." - ] - }, - { - "cell_type": "code", - "collapsed": false, - "input": [ - "import timeit\n", - "\n", - "def cond_loop():\n", - " even_nums = []\n", - " for i in range(100):\n", - " if i % 2 == 0:\n", - " even_nums.append(i)\n", - " return even_nums\n", - "\n", - "def list_compr():\n", - " even_nums = [i for i in range(100) if i % 2 == 0]\n", - " return even_nums\n", - " \n", - "def filter_func():\n", - " even_nums = list(filter((lambda x: x % 2 == 0), range(100)))\n", - " return even_nums\n", - "\n", - "%timeit cond_loop()\n", - "%timeit list_compr()\n", - "%timeit filter_func()\n", - "\n", - "#\n", - "# Python 3.4.0\n", - "# MacOS X 10.9.2\n", - "# 2.5 GHz Intel Core i5\n", - "# 4 GB 1600 Mhz DDR3\n", - "#" - ], - "language": "python", - "metadata": {}, - "outputs": [ - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "100000 loops, best of 3: 14.4 \u00b5s per loop\n", - "100000 loops, best of 3: 12 \u00b5s per loop" - ] - }, - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "\n", - "10000 loops, best of 3: 23.9 \u00b5s per loop" - ] - }, - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "\n" - ] - } - ], - "prompt_number": 14 - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "\n", - "# Dictionary operations " - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "\n", - "## Adding elements to a Dictionary\n", - "\n", - "All three functions below count how often different elements 
(values) occur 
in a list. \n", - "E.g., for the list ['a', 'b', 'a', 'c'], the dictionary would look like this: \n", - "`my_dict = {'a': 2, 'b': 1, 'c': 1}`" - ] - }, - { - "cell_type": "code", - "collapsed": false, - "input": [ - "import random\n", - "import copy\n", - "import timeit\n", - "\n", - "\n", - "\n", - "def add_element_check1(my_dict, elements):\n", - " for e in elements:\n", - " if e not in my_dict:\n", - " my_dict[e] = 1\n", - " else:\n", - " my_dict[e] += 1\n", - " \n", - "def add_element_check2(my_dict, elements):\n", - " for e in elements:\n", - " if e not in my_dict:\n", - " my_dict[e] = 0\n", - " my_dict[e] += 1 \n", - "\n", - "def add_element_except(my_dict, elements):\n", - " for e in elements:\n", - " try:\n", - " my_dict[e] += 1\n", - " except KeyError:\n", - " my_dict[e] = 1\n", - " \n", - "\n", - "random.seed(123)\n", - "rand_ints = [random.randrange(1, 10) for i in range(100)]\n", - "empty_dict = {}\n", - "\n", - "print('Results for 100 integers in range 1-10') \n", - "%timeit add_element_check1(copy.deepcopy(empty_dict), rand_ints)\n", - "%timeit add_element_check2(copy.deepcopy(empty_dict), rand_ints)\n", - "%timeit add_element_except(copy.deepcopy(empty_dict), rand_ints)\n", - " \n", - "print('\\nResults for 1000 integers in range 1-10') \n", - "rand_ints = [random.randrange(1, 10) for i in range(1000)]\n", - "empty_dict = {}\n", - "\n", - "%timeit add_element_check1(copy.deepcopy(empty_dict), rand_ints)\n", - "%timeit add_element_check2(copy.deepcopy(empty_dict), rand_ints)\n", - "%timeit add_element_except(copy.deepcopy(empty_dict), rand_ints)\n", - "\n", - "print('\\nResults for 1000 integers in range 1-1000') \n", - "rand_ints = [random.randrange(1, 10) for i in range(1000)]\n", - "empty_dict = {}\n", - "\n", - "%timeit add_element_check1(copy.deepcopy(empty_dict), rand_ints)\n", - "%timeit add_element_check2(copy.deepcopy(empty_dict), rand_ints)\n", - "%timeit add_element_except(copy.deepcopy(empty_dict), rand_ints)\n", - "\n", - "#\n", - "# 
Python 3.4.0\n", - "# MacOS X 10.9.2\n", - "# 2.5 GHz Intel Core i5\n", - "# 4 GB 1600 Mhz DDR3\n", - "#" - ], - "language": "python", - "metadata": {}, - "outputs": [ - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "Results for 100 integers in range 1-10\n", - "100000 loops, best of 3: 16.6 \u00b5s per loop" - ] - }, - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "\n", - "100000 loops, best of 3: 17.6 \u00b5s per loop" - ] - }, - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "\n", - "100000 loops, best of 3: 17.9 \u00b5s per loop" - ] - }, - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "\n", - "\n", - "Results for 1000 integers in range 1-10\n", - "10000 loops, best of 3: 135 \u00b5s per loop" - ] - }, - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "\n", - "10000 loops, best of 3: 125 \u00b5s per loop" - ] - }, - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "\n", - "10000 loops, best of 3: 105 \u00b5s per loop" - ] - }, - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "\n", - "\n", - "Results for 1000 integers in range 1-1000\n", - "10000 loops, best of 3: 122 \u00b5s per loop" - ] - }, - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "\n", - "10000 loops, best of 3: 123 \u00b5s per loop" - ] - }, - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "\n", - "10000 loops, best of 3: 104 \u00b5s per loop" - ] - }, - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "\n" - ] - } - ], - "prompt_number": 13 - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "### Conclusion\n", - "Interestingly, the `try-except` loop pays off if we have more elements (here: 1000 integers instead of 100) as dictionary keys to check. Also, it doesn't matter much whether the elements exist or do not exist in the dictionary, yet." 
- ] - } - ], - "metadata": {} - } - ] -} \ No newline at end of file diff --git a/.ipynb_checkpoints/timeit_tests-checkpoint.ipynb b/.ipynb_checkpoints/timeit_tests-checkpoint.ipynb deleted file mode 100644 index d1bc680..0000000 --- a/.ipynb_checkpoints/timeit_tests-checkpoint.ipynb +++ /dev/null @@ -1,1883 +0,0 @@ -{ - "metadata": { - "name": "", - "signature": "sha256:75d807f509bd9f76b2e14a5a048cb44852a3318bcd0d95afc95d1c9b2904c078" - }, - "nbformat": 3, - "nbformat_minor": 0, - "worksheets": [ - { - "cells": [ - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "Sebastian Raschka \n", - "last updated: 04/14/2014 \n", - "\n", - "[Link to this IPython Notebook on GitHub](https://github.com/rasbt/python_reference/blob/master/benchmarks/timeit_tests.ipynb)" - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "

\n", - "I am really looking forward to your comments and suggestions to improve and extend this collection! Just send me a quick note \n", - "via Twitter: [@rasbt](https://twitter.com/rasbt) \n", - "or Email: [bluewoodtree@gmail.com](mailto:bluewoodtree@gmail.com)\n", - "

" - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "\n", - "\n", - "# Python benchmarks via `timeit`" - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "\n", - "

\n", - "

" - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "# Sections\n", - "- [String operations](#string_operations)\n", - " - [String formatting: .format() vs. binary operator %s](#str_format_bin)\n", - " - [String reversing: [::-1] vs. `''.join(reversed())`](#str_reverse)\n", - " - [String concatenation: `+=` vs. `''.join()`](#string_concat)\n", - " - [Assembling strings](#string_assembly) \n", - " - [Testing if a string is an integer](#is_integer)\n", - " - [Testing if a string is a number](#is_number)\n", - "- [List operations](#list_operations)\n", - " - [List reversing: [::-1] vs. reverse() vs. reversed()](#list_reverse)\n", - " - [Creating lists using conditional statements](#create_cond_list)\n", - "- [Dictionary operations](#dict_ops) \n", - " - [Adding elements to a dictionary](#adding_dict_elements)\n", - "- [Comprehensions vs. for-loops](#comprehensions)\n", - "- [Copying files by searching directory trees](#find_copy)\n", - "- [Returning column vectors slicing through a numpy array](#row_vectors)\n", - "- [Speed of numpy functions vs Python built-ins and std. lib.](#numpy)" - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "" - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "# String operations" - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "[[back to top](#sections)]" - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "\n", - "

\n", - "

" - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "\n", - "## String formatting: `.format()` vs. binary operator `%s`\n" - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "[[back to top](#sections)]" - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "We expect the string .format() method to perform slower than %, because it is doing the formatting for each object itself, where formatting via the binary % is hard-coded for known types. But let's see how big the difference really is..." - ] - }, - { - "cell_type": "code", - "collapsed": false, - "input": [ - "import timeit\n", - "\n", - "def test_format():\n", - " return ['{}'.format(i) for i in range(1000000)]\n", - "\n", - "def test_binaryop():\n", - " return ['%s' %i for i in range(1000000)]\n", - "\n", - "%timeit test_format()\n", - "%timeit test_binaryop()\n", - "\n", - "#\n", - "# Python 3.4.0\n", - "# MacOS X 10.9.2\n", - "# 2.5 GHz Intel Core i5\n", - "# 4 GB 1600 Mhz DDR3\n", - "#" - ], - "language": "python", - "metadata": {}, - "outputs": [ - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "1 loops, best of 3: 400 ms per loop\n", - "1 loops, best of 3: 241 ms per loop" - ] - }, - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "\n" - ] - } - ], - "prompt_number": 3 - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "\n", - "

\n", - "

" - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "\n", - "## String reversing: `[::-1]` vs. `''.join(reversed())`" - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "[[back to top](#sections)]" - ] - }, - { - "cell_type": "code", - "collapsed": false, - "input": [ - "import timeit\n", - "\n", - "def reverse_join(my_str):\n", - " return ''.join(reversed(my_str))\n", - " \n", - "def reverse_slizing(my_str):\n", - " return my_str[::-1]\n", - "\n", - "\n", - "# Test to show that both work\n", - "a = reverse_join('abcd')\n", - "b = reverse_slizing('abcd')\n", - "assert(a == b and a == 'dcba')\n", - "\n", - "%timeit reverse_join('abcd')\n", - "%timeit reverse_slizing('abcd')\n", - "\n", - "# Python 3.4.0\n", - "# MacOS X 10.9.2\n", - "# 2.4 GHz Intel Core Duo\n", - "# 8 GB 1067 Mhz DDR3\n", - "#" - ], - "language": "python", - "metadata": {}, - "outputs": [ - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "1000000 loops, best of 3: 1.28 \u00b5s per loop\n", - "1000000 loops, best of 3: 337 ns per loop" - ] - }, - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "\n" - ] - } - ], - "prompt_number": 13 - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "\n", - "

\n", - "

" - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "\n", - "## String concatenation: `+=` vs. `''.join()`" - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "[[back to top](#sections)]" - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "Strings in Python are immutable objects. So, each time we append a character to a string, it has to be created \u201cfrom scratch\u201d in memory. Thus, the answer to the question \u201cWhat is the most efficient way to concatenate strings?\u201d is quite obvious, but the relative performance gains are nonetheless interesting." - ] - }, - { - "cell_type": "code", - "collapsed": false, - "input": [ - "import timeit\n", - "\n", - "def string_add(in_chars):\n", - " new_str = ''\n", - " for char in in_chars:\n", - " new_str += char\n", - " return new_str\n", - "\n", - "def string_join(in_chars):\n", - " return ''.join(in_chars)\n", - "\n", - "test_chars = ['a', 'b', 'c', 'd', 'e', 'f']\n", - "\n", - "%timeit string_add(test_chars)\n", - "%timeit string_join(test_chars)\n", - "\n", - "#\n", - "# Python 3.4.0\n", - "# MacOS X 10.9.2\n", - "# 2.5 GHz Intel Core i5\n", - "# 4 GB 1600 Mhz DDR3\n", - "#" - ], - "language": "python", - "metadata": {}, - "outputs": [ - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "1000000 loops, best of 3: 595 ns per loop\n", - "1000000 loops, best of 3: 269 ns per loop" - ] - }, - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "\n" - ] - } - ], - "prompt_number": 16 - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "\n", - "\n", - "

\n", - "

" - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "\n", - "## Assembling strings\n", - "\n" - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "[[back to top](#sections)]" - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "Next, I wanted to compare different methods of string \u201cassembly.\u201d This is different from simple string concatenation, which we have seen in the previous section, since we insert values into a string, e.g., from a variable." - ] - }, - { - "cell_type": "code", - "collapsed": false, - "input": [ - "import timeit\n", - "\n", - "def plus_operator():\n", - " return 'a' + str(1) + str(2) \n", - " \n", - "def format_method():\n", - " return 'a{}{}'.format(1,2)\n", - " \n", - "def binary_operator():\n", - " return 'a%s%s' %(1,2)\n", - "\n", - "%timeit plus_operator()\n", - "%timeit format_method()\n", - "%timeit binary_operator()\n", - "\n", - "#\n", - "# Python 3.4.0\n", - "# MacOS X 10.9.2\n", - "# 2.5 GHz Intel Core i5\n", - "# 4 GB 1600 Mhz DDR3\n", - "#" - ], - "language": "python", - "metadata": {}, - "outputs": [ - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "1000000 loops, best of 3: 764 ns per loop\n", - "1000000 loops, best of 3: 494 ns per loop" - ] - }, - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "\n", - "10000000 loops, best of 3: 79.3 ns per loop" - ] - }, - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "\n" - ] - } - ], - "prompt_number": 17 - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "\n", - "\n", - "

\n", - "

" - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "\n", - "## Testing if a string is an integer" - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "[[back to top](#sections)]" - ] - }, - { - "cell_type": "code", - "collapsed": false, - "input": [ - "import timeit\n", - "\n", - "def string_is_int(a_str):\n", - " try:\n", - " int(a_str)\n", - " return True\n", - " except ValueError:\n", - " return False\n", - "\n", - "an_int = '123'\n", - "no_int = '123abc'\n", - "\n", - "%timeit string_is_int(an_int)\n", - "%timeit string_is_int(no_int)\n", - "%timeit an_int.isdigit()\n", - "%timeit no_int.isdigit()\n", - "\n", - "#\n", - "# Python 3.4.0\n", - "# MacOS X 10.9.2\n", - "# 2.5 GHz Intel Core i5\n", - "# 4 GB 1600 Mhz DDR3\n", - "#" - ], - "language": "python", - "metadata": {}, - "outputs": [ - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "1000000 loops, best of 3: 401 ns per loop\n", - "100000 loops, best of 3: 3.04 \u00b5s per loop" - ] - }, - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "\n", - "10000000 loops, best of 3: 92.1 ns per loop" - ] - }, - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "\n", - "10000000 loops, best of 3: 96.3 ns per loop" - ] - }, - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "\n" - ] - } - ], - "prompt_number": 5 - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "\n", - "

\n", - "

\n" - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "\n", - "## Testing if a string is a number" - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "[[back to top](#sections)]" - ] - }, - { - "cell_type": "code", - "collapsed": false, - "input": [ - "import timeit\n", - "\n", - "def string_is_number(a_str):\n", - " try:\n", - " float(a_str)\n", - " return True\n", - " except ValueError:\n", - " return False\n", - " \n", - "a_float = '1.234'\n", - "no_float = '123abc'\n", - "\n", - "a_float.replace('.','',1).isdigit()\n", - "no_float.replace('.','',1).isdigit()\n", - "\n", - "%timeit string_is_number(an_int)\n", - "%timeit string_is_number(no_int)\n", - "%timeit a_float.replace('.','',1).isdigit()\n", - "%timeit no_float.replace('.','',1).isdigit()\n", - "\n", - "#\n", - "# Python 3.4.0\n", - "# MacOS X 10.9.2\n", - "# 2.5 GHz Intel Core i5\n", - "# 4 GB 1600 Mhz DDR3\n", - "#" - ], - "language": "python", - "metadata": {}, - "outputs": [ - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "1000000 loops, best of 3: 400 ns per loop\n", - "1000000 loops, best of 3: 1.15 \u00b5s per loop" - ] - }, - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "\n", - "1000000 loops, best of 3: 452 ns per loop" - ] - }, - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "\n", - "1000000 loops, best of 3: 394 ns per loop" - ] - }, - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "\n" - ] - } - ], - "prompt_number": 6 - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "\n", - "\n", - "

\n", - "

" - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "\n", - "# List operations" - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "[[back to top](#sections)]" - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "\n", - "

\n", - "

" - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "\n", - "## List reversing - `[::-1]` vs. `reverse()` vs. `reversed()`" - ] - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "[[back to top](#sections)]" - ] - }, - { - "cell_type": "code", - "collapsed": false, - "input": [ - "import timeit\n", - "\n", - "def reverse_func(my_list):\n", - " new_list = my_list[:]\n", - " new_list.reverse()\n", - " return new_list\n", - " \n", - "def reversed_func(my_list):\n", - " return list(reversed(my_list))\n", - "\n", - "def reverse_slizing(my_list):\n", - " return my_list[::-1]\n", - "\n", - "%timeit reverse_func([1,2,3,4,5])\n", - "%timeit reversed_func([1,2,3,4,5])\n", - "%timeit reverse_slizing([1,2,3,4,5])\n", - "\n", - "# Python 3.4.0\n", - "# MacOS X 10.9.2\n", - "# 2.4 GHz Intel Core Duo\n", - "# 8 GB 1067 Mhz DDR3\n", - "#" - ], - "language": "python", - "metadata": {}, - "outputs": [ - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "1000000 loops, best of 3: 930 ns per loop\n", - "1000000 loops, best of 3: 1.89 \u00b5s per loop" - ] - }, - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "\n", - "1000000 loops, best of 3: 775 ns per loop" - ] - }, - { - "output_type": "stream", - "stream": "stdout", - "text": [ - "\n" - ] - } - ], - "prompt_number": 1 - }, - { - "cell_type": "markdown", - "metadata": {}, - "source": [ - "\n", - "

\n", - "